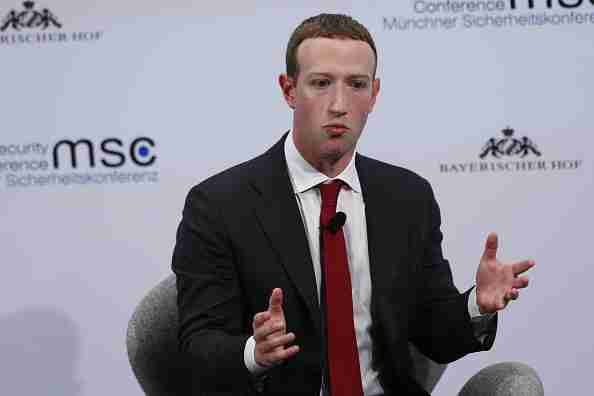

Mark Zuckerberg has sacrificed safety in his pursuit of a mass-market metaverse

During this week’s Super Bowl 2022, Meta aired a commercial for its Quest 2 virtual reality headset that seems to have fallen flat with most viewers but perhaps provides a more honest look at the platform’s dark side.

Back when Mark Zuckerberg announced Facebook’s rebrand as Meta last year, he set out a vision of a virtual reality ‘metaverse’ where billions of users work, play and socialize. It’s a vision that would take virtual reality far beyond the enthusiast gaming market it has targeted to date, and require VR to become as ubiquitous as mobile phones or PCs are today.

Meta has yet to deliver on that vision, but it’s making progress. Its Meta Quest 2 headset has a claim to being the first truly mass-market headset, providing access to Mark Zuckerberg’s vision of the metaverse for $299. It is reported to have sold more than 10 million units.

Consumers using a Quest 2 for the first time will realize that Meta is building something unlike the gaming-focused headsets we’ve seen to date, instead focusing on social features and ease of access. This focus on social interaction brings into view new risks resembling those posed by abuse on existing social platforms like Facebook. Indeed, Mark Zuckerberg acknowledged these risks when he promised “privacy and safety need to be built into the Metaverse from day one.”

Meta’s repeated decisions to put profit before people, exposed by the Center for Countering Digital Hate’s (CCDH) own research and by whistleblowers including Frances Haugen, mean that there is substance to the skepticism expressed when Mr Zuckerberg launched his new platform. But researchers face new challenges investigating virtual reality platforms, where harms unfold in real time and from a first-person perspective.

That’s why CCDH’s investigation of Meta’s VR platform takes a typical user’s perspective as its starting point, using the world’s most popular VR headset, the Meta Quest 2, and its most popular dedicated social app, VRChat.

This revealed a VR platform that is simply unsafe, with one incident of abuse or harassment recorded every 7 minutes during researchers’ use of the platform. And it revealed the extent to which Meta is compromising on safety in its drive to make Mark Zuckerberg’s vision of mass-market VR a reality.

These compromises on safety begin the moment a user enters the metaverse. By default, anyone who straps a Quest 2 headset to their face is logged in as the user who set it up, with no identity or age checks required. This explains why so many minors are able to access VR, many of them apparently below Meta’s stated minimum age of 13.

It’s why the research found so many incidents of abuse and harassment on the platform that involved under-18s, and it’s one reason why CCDH’s research prompted the UK’s data regulator to seek a meeting with Meta about parental controls on its Quest 2 headset. Without these controls, Meta’s supposed age limit of 13+ is strictly theoretical.

Once inside the metaverse, it becomes apparent that Meta’s reporting system is not up to the task of allowing users to capture and report abusive behavior. Meta’s policies on abuse state that they apply to its users’ behavior in all metaverse apps, so here at CCDH we investigated the platform’s most popular dedicated social app, VRChat.

VRChat allows users to join 3D chat rooms that it refers to as “worlds,” some of the most popular of which have been fashioned by users to resemble bars or clubs. Users joining these worlds communicate by voice, and are represented by customizable 3D avatars that match their movements in the real world. The app is popular enough that the Meta Quest Twitter account promoted it as a selling point for its headsets in the run-up to Christmas last year.

User interactions in VRChat are extremely chaotic. Typical worlds can contain nearly twenty users simultaneously speaking over each other and interacting, appearing as avatars of wildly different shapes and sizes drawn from internet culture, Japanese manga and film.

Reporting is baked into the operating system of Meta’s headsets, and can be reached at any time by hitting the menu button on one of its handheld controllers. Hitting that button initiates a short recording of the user’s view, after which they must provide the Quest username they are reporting.

This system has three key failings.

First, abusive behavior in chaotic environments like VRChat can be intense but fleeting, meaning some incidents can take place before a user has a chance to hit the report button and record them. Meta’s headset does not keep retrospective footage for reports outside of its own first-party social apps.

Second, users in popular third-party apps are able to obscure their usernames, even where they are identified as being logged in using an Oculus profile. This makes some users impossible to report, and indeed out of 100 incidents recorded in our research, just 51 could be successfully reported. Meta’s first line of defense against abuse simply does not work.

Third, Meta provides no feedback to users on whether a report is being investigated, or what action it has resulted in. Meta states that violations of its policies can result in suspensions or bans, but we received no feedback on the 51 reports we successfully filed.

The consequence of these failures is that abuse is rife in the metaverse. We saw minors being exposed to graphic sexual content. We saw bullying, sexual harassment and abuse of other users, including minors. We even saw minors being groomed to repeat racist slurs and extremist talking points.

We recorded this footage to evidence the harms taking place in VR. Most of the content has been too extreme to air publicly, although excerpts can be seen in recent coverage of this research.

This behavior was not exceptional; it was the norm. Across the 11 hours and 30 minutes of recordings we made while researching VRChat, we found 100 potential violations of Meta’s policies for VR, equivalent to one roughly every 7 minutes.
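For readers who want to check the arithmetic, the short sketch below reproduces the headline figures from the numbers quoted in this article: 11 hours and 30 minutes of recordings, 100 recorded incidents, and 51 successful reports. The function and variable names are purely illustrative and are not part of CCDH's methodology.

```python
# Figures quoted in the article (names here are illustrative only).
RECORDING_MINUTES = 11 * 60 + 30   # 11 hours 30 minutes of footage
INCIDENTS = 100                    # potential policy violations recorded
REPORTED = 51                      # incidents that could be reported


def minutes_per_incident(total_minutes: float, incidents: int) -> float:
    """Average gap, in minutes, between recorded incidents."""
    return total_minutes / incidents


def share_reported(reported: int, incidents: int) -> float:
    """Fraction of recorded incidents that could be reported."""
    return reported / incidents


if __name__ == "__main__":
    gap = minutes_per_incident(RECORDING_MINUTES, INCIDENTS)
    share = share_reported(REPORTED, INCIDENTS)
    print(f"One incident every {gap:.1f} minutes on average")
    print(f"{share:.0%} of incidents could be reported")
```

The average gap works out to just under 7 minutes, which is the figure rounded in the text above.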

Meta recently announced it would address cases of harassment in its own apps by implementing a 4ft “personal boundary”. But the vast majority of the 100 incidents we recorded did not involve virtual “contact” between users.

The metaverse is a flexible and creative environment, but that means abuse can take many forms. To manage these harms, Meta must do something it has proved incapable of with its existing social platforms: it must prioritize safety over the ruthless pursuit of profit.

Immediately, it must implement basic age controls. Our research shows that minors are being exposed to serious harm on Meta’s platform because accessing the metaverse is as simple as putting on a headset. Applying identity or age checks may be inconvenient, but it’s essential if Meta is to manage this serious category of VR harms.

Meta must also radically improve its complaints reporting system and set out clear consequences for abuse. Effective reporting systems matched by investment in moderation teams are absolutely necessary given the complexity of abuse in VR. This must be backed by stating the consequences for abuse and clearly communicating them to users. This means setting out the circumstances in which Meta will put safety ahead of profit and cut off an abusive user’s access to its services, including transactions in its VR app store.

Consumer information is the first step. System and complaint information also needs to be made available to regulators, with anonymized data available to independent academics and civil society (with appropriate privacy protections in place). This data will be essential for understanding emerging and common problems and trends, including problematic accounts and behavior, and the effectiveness of any safety interventions that Meta has applied. Without this, we would have all the promises of accountability but, as many Facebook users already experience, complaints falling into a black hole.

Finally, Meta must follow through on its commitment to enforcing its policies across all apps by setting minimum safety standards for third-party apps in its VR app store. Meta expects third-party apps to play a leading role in its metaverse ecosystem, but our research shows that safety and reporting tools touted by Meta simply do not work in popular third-party apps. The solution is for Meta to put safety ahead of profit, and set minimum safety standards for apps in its store, for example requiring compatibility with its reporting tools.

These measures include and expand on what Meta has already promised to do to keep VR users safe, and what must be done to deliver on that promise. If Meta fails to do so, the growth of the metaverse will be matched by growing calls for safety regulations in VR.